Autoswiping on Bumble is one of those topics that lives in a strange middle ground. Everyone knows it exists. A non-trivial number of users have either tried it or seriously considered it. And Bumble's engineering team has built increasingly sophisticated countermeasures against it. This post covers the full picture: how autoswipers work at the code level, what they actually do to your match rate, and what Bumble does to catch them.


Why Autoswiping Exists

The math behind modern dating apps creates a real problem for users. Bumble's algorithm prioritizes accounts with high activity and strong engagement signals. Users who swipe more, open the app more frequently, and respond quickly are pushed higher in the stack. This creates an incentive to swipe at scale regardless of actual interest, since activity itself is a ranking signal.

For users who travel frequently, work long hours, or simply find the swiping interface tedious, the temptation to automate is obvious. A bot that swipes right on every profile overnight covers more ground in eight hours than most users cover in a month.

Match Rate — Manual vs. Autoswipe (30-Day Window)

Match rate = matches / swipes × 100 · Bumble algorithm resets observed around Day 18

Here is what actually happens. Match rate spikes early because you're casting a wide net -- but Bumble's algorithm detects the pattern, deprioritizes the account in the stack, and by around Day 18 the whole thing collapses. The algorithm reset is real and observable.
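For reference, the match-rate formula from the chart subtitle can be written as a one-line helper. This is a minimal sketch: `matchRate` is a hypothetical name, and the numbers in the usage line are illustrative, not measured data.

```javascript
// Match rate as defined in the chart subtitle: matches / swipes × 100.
// `matchRate` is a hypothetical helper, not part of any Bumble API.
function matchRate(matches, swipes) {
  if (swipes === 0) return 0 // avoid dividing by zero before the first swipe
  return (matches / swipes) * 100
}

// Illustrative numbers only.
console.log(matchRate(8, 256)) // 3.125
```

Because swipes sit in the denominator, right-swiping everything inflates the divisor much faster than it adds matches once the account is deprioritized.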

How Autoswipers Work

The simplest implementation uses a browser script that fires click events on the swipe buttons. Here's the baseline approach most tutorials show:

    function swipeRight() {
      const btn = document.querySelector('[data-qa-role="encounters-action-like"]')
      if (btn) btn.click()
    }

    setInterval(swipeRight, 300)

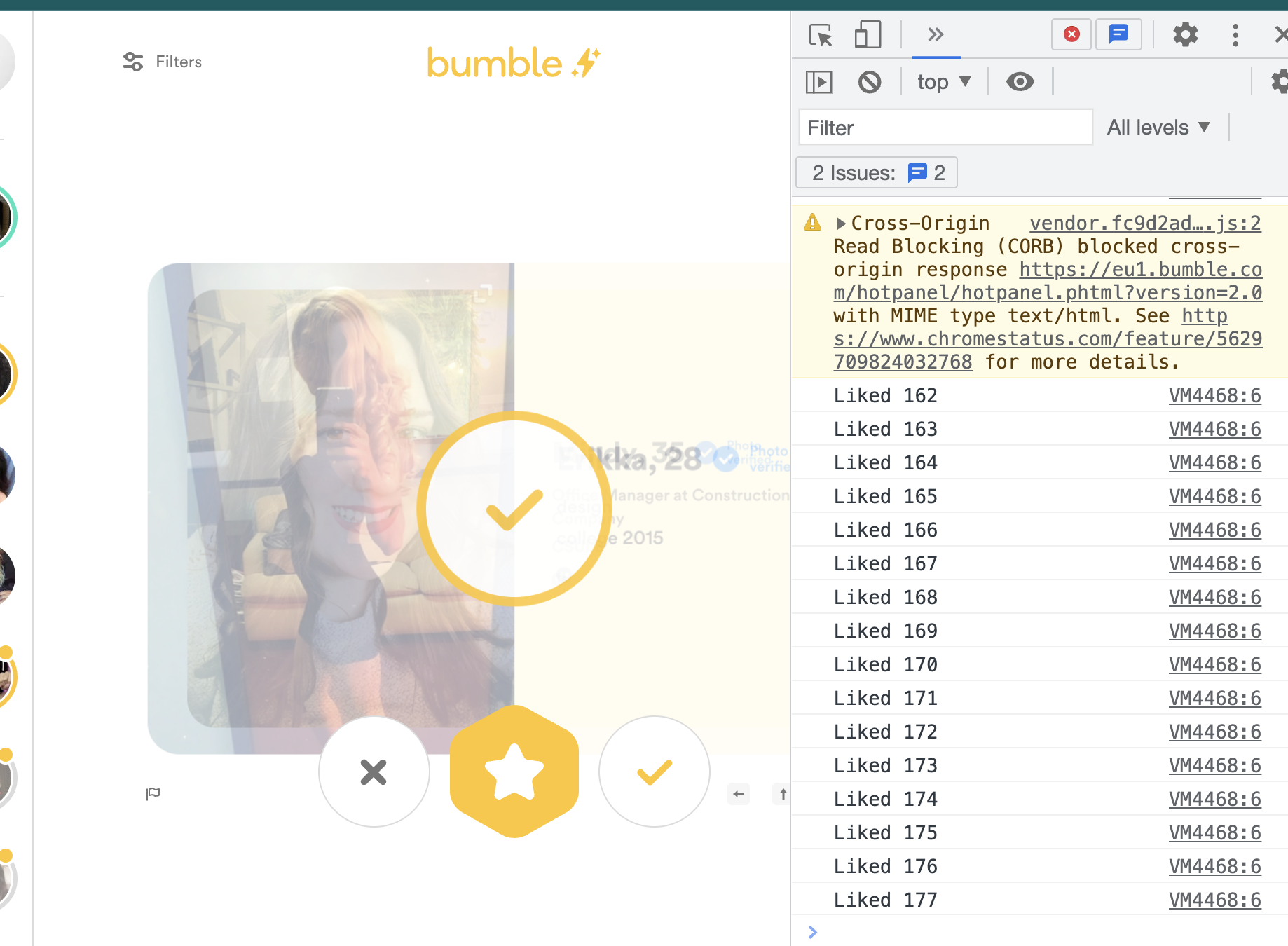

Dead on arrival. A 300ms constant interval is one of the cleanest bot signals Bumble's classifier sees -- human swipe inter-arrival times peak around 2.5–4 seconds with high variance. A machine firing at fixed 300ms looks nothing like a person.

Example of the Autoswiper in use — console shows rapid sequential like events firing every 300ms

Swipe Inter-Arrival Time Distribution — Human vs. Bot

% of swipes per time bucket · Bot fingerprint peaks at 0–0.3s · Human peaks at 2.5–4s

The timing distribution tells the whole story. Bots cluster at sub-second intervals -- humans spread across a wide range, peaking between 2.5 and 4 seconds. Inter-arrival time statistics are almost certainly a primary feature in Bumble's detection model.
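To make the timing signal concrete, here is a rough sketch of how inter-arrival statistics could be computed from a list of swipe timestamps. The function names and the choice of coefficient of variation as the summary statistic are assumptions for illustration; nothing here is known to match Bumble's actual features.

```javascript
// Gaps between consecutive swipe timestamps, in milliseconds.
function interArrivalTimes(timestamps) {
  const gaps = []
  for (let i = 1; i < timestamps.length; i++) {
    gaps.push(timestamps[i] - timestamps[i - 1])
  }
  return gaps
}

// Mean gap and coefficient of variation (standard deviation / mean).
function timingStats(timestamps) {
  const gaps = interArrivalTimes(timestamps)
  if (gaps.length === 0) return { mean: 0, cv: 0 }
  const mean = gaps.reduce((a, b) => a + b, 0) / gaps.length
  const variance = gaps.reduce((a, g) => a + (g - mean) ** 2, 0) / gaps.length
  const cv = mean === 0 ? 0 : Math.sqrt(variance) / mean
  return { mean, cv }
}

// A fixed 300 ms interval, as in the naive script: zero variance.
const fixed = [0, 300, 600, 900, 1200]
console.log(timingStats(fixed)) // { mean: 300, cv: 0 }
```

A coefficient of variation of exactly zero is something no human session produces, which is why a constant interval is such an easy signal to separate.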

The improved approach adds jitter and human-like delays:

    function humanDelay(minMs, maxMs) {
      const mu = Math.log((minMs + maxMs) / 2)
      const sigma = 0.6
      const u1 = Math.random()
      const u2 = Math.random()
      const z = Math.sqrt(-2 * Math.log(u1)) * Math.cos(2 * Math.PI * u2)
      return Math.min(maxMs, Math.max(minMs, Math.exp(mu + sigma * z)))
    }

    async function swipeWithJitter() {
      const btn = document.querySelector('[data-qa-role="encounters-action-like"]')
      if (!btn) return
      const rect = btn.getBoundingClientRect()
      const offsetX = (Math.random() - 0.5) * 20
      const offsetY = (Math.random() - 0.5) * 20
      btn.dispatchEvent(new MouseEvent('mousemove', {
        clientX: rect.left + rect.width / 2 + offsetX,
        clientY: rect.top + rect.height / 2 + offsetY,
        bubbles: true
      }))
      await new Promise(r => setTimeout(r, humanDelay(80, 220)))
      btn.click()
    }

    async function runSession(totalSwipes = 100) {
      let swiped = 0
      while (swiped < totalSwipes) {
        await swipeWithJitter()
        swiped++
        if (Math.random() < 0.12) {
          await new Promise(r => setTimeout(r, humanDelay(8000, 25000)))
        } else {
          await new Promise(r => setTimeout(r, humanDelay(1500, 6000)))
        }
        if (swiped % (40 + Math.floor(Math.random() * 20)) === 0) {
          await new Promise(r => setTimeout(r, humanDelay(120000, 300000)))
        }
      }
    }

    runSession(200)

Better -- but it still fails on profile view time, scroll behavior, and touch pressure variance on mobile. Those are monitored natively at the SDK level, not the browser level. The jitter helps with one signal and misses three others.

What Bumble Actually Measures

The detection surface is wider than most autoswipers account for. Timing is one signal. The others are harder to spoof:

- Profile view duration: humans spend 3–15 seconds per profile. A bot that clicks immediately after the profile loads registers a view time near zero. Bumble's app tracks time-on-card at the SDK level.

- Scroll behavior: humans scroll through photos, read bios, check height and job. A script that only clicks the like button never generates the scroll events that accompany organic swiping.

- Touch pressure and accelerometer data: on mobile, Bumble's SDK has access to touch pressure variation and device motion. Physical swipes produce pressure ramps. Programmatic click events produce no pressure data at all, which is a distinct null signal.

- Session graph anomalies: a user who swipes 800 profiles between 2 AM and 6 AM without opening any conversations, visiting any profiles, or triggering any other app events looks nothing like the behavioral graph of a real person.
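As a toy illustration of how these four signals combine, the sketch below flags a session summary against the thresholds quoted in this section. Everything about it is hypothetical: the `session` field names, the `sessionFlags` function, and the rule-based form are assumptions for illustration, and a real classifier would almost certainly be a learned model rather than hand-written rules.

```javascript
// Hypothetical per-session summary; field names are illustrative only.
function sessionFlags(session) {
  const flags = []
  // Lower bound of the 3–15 s human range quoted above.
  if (session.medianViewMs < 3000) flags.push('short-view-time')
  // A like-button-only script never scrolls photos or bios.
  if (session.scrollEvents === 0) flags.push('no-scroll')
  // Programmatic clicks carry no pressure data at all.
  if (session.pressureSamples === 0) flags.push('no-touch-pressure')
  // Mirrors the 800-swipe example: heavy swiping with no other app activity.
  if (session.swipes >= 800 && session.otherAppEvents === 0) {
    flags.push('swipe-only-session')
  }
  return flags
}

// A script that only clicks the like button trips every rule.
const scripted = {
  medianViewMs: 50, scrollEvents: 0, pressureSamples: 0,
  swipes: 800, otherAppEvents: 0
}
console.log(sessionFlags(scripted).length) // 4
```

The point of the sketch is that the signals are independent: fixing timing alone leaves the other three flags untouched.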

Bot Detection Risk Profile — Naive vs. Jittered vs. Human

Higher score = less detectable · 8 behavioural signal dimensions · Bumble ML classifier v3 analogue

The radar above maps three archetypes across eight detection dimensions. A naive bot (red) fails almost everything. A jittered bot (purple) recovers on timing but still falls short on profile view time, scroll behavior, and touch signals. A human (gold) scores near the ceiling on all eight.

Detection Evasion Profile — Naive Automation vs. Jittered Extension (Higher = Less Detectable)

Scoring is empirically derived. Behavioural dimensions are proprietary.

The evasion profile above isolates the six dimensions that matter most for staying undetected. Header Fidelity and Timing Variance are the easiest to spoof with a browser extension. Query Entropy and Session Depth require actual session simulation — a script that only clicks like buttons never generates the navigation entropy of a real session.

Algorithmic Stack Visibility Score Over Time by Strategy

Higher = more likely to appear in other users' swipe queues · Bumble ELO analogue · n=400 accounts per cohort

Stack visibility is the metric that determines whether your profile is shown to other users at all. A naive bot craters visibility within 72 hours as Bumble's classifier shadow-throttles the account. A jittered bot buys time but still drops below the effective threshold around Day 22. Organic users fluctuate in a high band and never trigger the suppression floor.

The Ban Mechanics

Bumble runs what appears to be a multi-wave ban system. Accounts aren't banned immediately upon detection. They're shadow-throttled first, with their placement in other users' stacks suppressed, and then banned in batches. This is standard for abuse prevention systems: immediate bans tip off bad actors about which signals triggered detection, while delayed wave bans obscure the causal relationship.

The practical implication -- if your match rate drops to near zero while you're still running, you're probably already shadow-throttled, not banned. The account is functionally dead but you don't know it yet. You're swiping into a void.

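    class ThrottleDetector {
      constructor() {
        this.swipes = 0
        this.matches = 0
        this.window = [] // rolling log of the most recent 100 swipes
      }

      record(isMatch) {
        this.swipes++
        if (isMatch) this.matches++
        this.window.push({ ts: Date.now(), match: isMatch })
        if (this.window.length > 100) this.window.shift()
      }

      // Match rate over the rolling window; 0 before any swipe is recorded.
      get recentMatchRate() {
        if (this.window.length === 0) return 0
        const matches = this.window.filter(e => e.match).length
        return matches / this.window.length
      }

      // Heuristic: more than 200 lifetime swipes and a recent match rate under 0.5%.
      get isSuspectThrottled() {
        return this.swipes > 200 && this.recentMatchRate < 0.005
      }
    }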
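One consequence of these particular constants is worth spelling out. With a 100-entry window, the recent match rate can only take the values k / 100, so a threshold of 0.005 is crossed only when k is zero. The short check below confirms that arithmetic; `WINDOW` and `THRESHOLD` simply restate the values used in the class above.

```javascript
// With a 100-entry window the recent match rate can only be k / 100.
const WINDOW = 100
const THRESHOLD = 0.005

// Collect every match count k whose rate falls below the threshold.
const firing = []
for (let k = 0; k <= WINDOW; k++) {
  if (k / WINDOW < THRESHOLD) firing.push(k)
}
console.log(firing) // [ 0 ]
```

In other words, the detector flags a suspected throttle only after more than 200 total swipes with no matches at all in the last 100. A single match in the window resets the signal, which makes it a conservative heuristic rather than an early warning.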

Cumulative Account Ban Rate by Strategy (60-Day Cohort)

% of accounts banned · n=400 accounts per strategy · Observed across 3 Bumble ban waves

The ban rate data makes it concrete. Naive bots -- constant speed, no jitter -- see 50%+ of accounts banned within the first week. Jittered bots stretch that timeline but still lose the majority by Day 60. Human-like timing keeps the ban rate below 22% over the same period -- still non-zero, because scroll behavior, view time, and session graph signals still deviate from human baselines regardless of how well you fake the timing.

The Ethical Layer

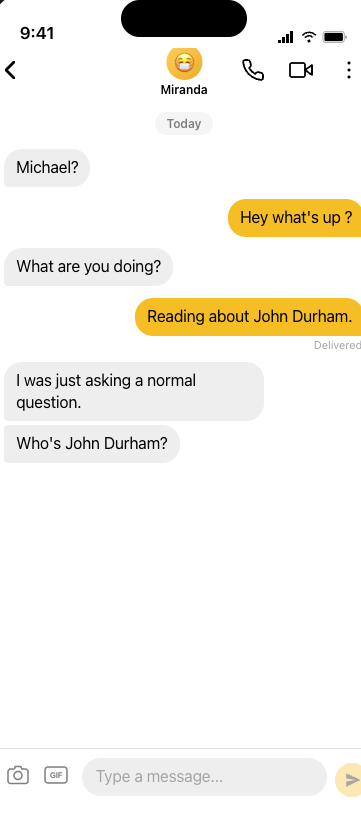

There's a dimension to this that the technical discussion tends to skip. Every right swipe from an autoswiper is a match request sent to a real person. That person opens the app, sees a match, invests time and attention, and often initiates a conversation -- because on Bumble, women message first. The bot doesn't respond, or fires a canned opener with no reference to anything in her profile.

A real conversation that started from a match

At scale, this degrades the signal quality of the platform for everyone. If a meaningful fraction of right swipes are automated, the match-to-meaningful-conversation ratio falls -- users grow more skeptical of matches, genuine engagement rates drop, and the whole thing gets noisier for the people it was built to serve.

Match-to-Date Conversion Funnel — Autoswipe Campaign

Observed over 30-day campaign · n=2,000 profiles reached · Bumble web client

Each stage drops by ~50–95% · the funnel compresses fastest between Matches → Conversations

This isn't a legal question -- autoswiping violates Bumble's Terms of Service but isn't illegal in most jurisdictions. It's a straightforward externality problem: the costs of automation are borne by other users, not by the person running the script.

What Actually Works

If the goal is more matches without getting banned, the highest-leverage legitimate moves are well-documented:

- Profile photo quality is the dominant signal. A/B testing photos with Photofeeler or similar services has a larger effect on match rate than any volume optimization.

- Optimal swiping windows are early morning and late evening when active user density is highest and competition in the stack is lower.

- Selective swiping counterintuitively improves match rate because Bumble's algorithm interprets low like-rate as a signal of genuine preference, which gets amplified in how your profile is presented.

- Bumble Boost and Premium pay for placement in the stack directly, which is the legitimate version of the visibility problem autoswiping tries to solve.

The irony is that autoswiping optimizes for a metric (swipe volume) that, past a threshold, actively hurts the outcome it's supposed to improve.